Monetisation is how content creators earn money on social media and video-sharing platforms. Because views and engagement earn creators money, some use dishonest practices to get more activity on their posts.

Learn about online monetisation and how you can keep your child safe.

Summary

- Monetisation is when content creators earn money from engagement on their content.

- Creators can also monetise their content through sponsored posts and paid partnerships.

- Social media platforms use algorithms to decide what users see, prioritising posts with engagement.

- Some users share rage bait to provoke others into interacting, driving engagement.

- Content designed to cause emotional reactions may negatively affect wellbeing, especially for children.

- There are steps you and your child can take to limit the negative impacts of clickbait and rage bait.

- Find supporting resources to help you deal with monetisation and the algorithm.

What is monetisation?

Monetisation is when content creators earn money from engagement and activity on their content. Creators earn money through views, which means that content with higher engagement (e.g., likes, comments and shares) can reach more people and earn more.

Payment often comes directly from the platform, which receives more advertising revenue when activity increases and passes some of this on to creators. Each platform sets its own share of earnings for content creators.

Because creators can earn money through engagement, some may attempt to drive engagement through whatever means possible. This can lead to them spreading disinformation or rage baiting people so that they click and comment on their posts.

How monetisation works on different platforms

Meta’s social platforms reward content creators for the number of views and watch time they get on videos and reels, as these lead to more ad impressions.

Brand deals and influencer marketing are also popular monetisation methods, with users sharing sponsored content or paid partnerships in exchange for payment and gifts.

The largest source of income for TikTok creators is brand deals and sponsored content. Creators can promote products in return for payment and can also earn commission through affiliate links by directing viewers to purchase items in the TikTok Shop.

TikTok’s creator reward system also pays video creators based on the views and watch time their videos get.

The YouTube Partner Program is the primary income source for most YouTube creators. This allows users to run adverts before, during and after their videos, and they receive a share of the advertising revenue.

YouTube creators can also earn money through sponsored content by promoting brands and products within their videos.

Monetisation via sponsorships

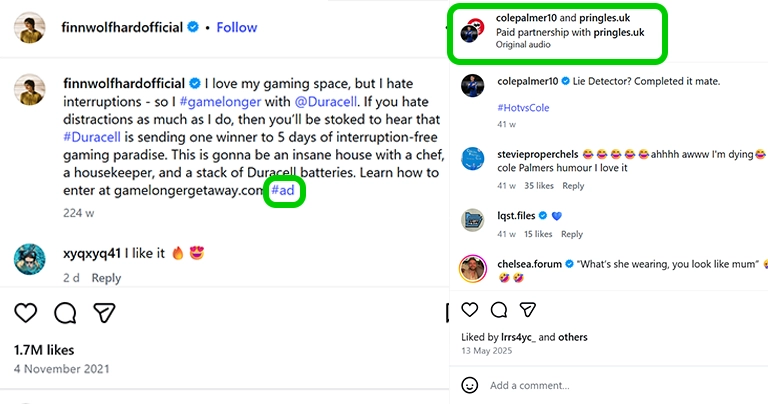

Creators can also monetise their content through sponsored posts and paid partnerships. Brands will often choose to advertise through creators with the highest levels of engagement. This is because there’s a greater chance of a large number of people seeing the advert and potentially purchasing the product.

Research from Ofcom shows that some children struggle to recognise sponsored posts on social media. Some children say an influencer or celebrity is promoting a product because:

- the influencer thinks the product or brand is cool to use;

- the influencer wants to share information with their followers.

Without recognising sponsored posts, young people could feel pressure to buy something they don’t need or can’t afford. This is because they might trust the creator’s recommendation, thinking it’s their genuine opinion, without realising it is part of an advertising deal.

Sponsored posts on social media platforms must be labelled as paid promotion, but children could miss this labelling.

How do the algorithms work?

Social media and content platforms don’t show posts in the order they’re shared. Instead, they use algorithms to customise a user’s feed. These algorithms prioritise content that gets attention, such as posts people like, comment on, watch for longer or that are popular with others they follow.

Because interacting with content leads to similar posts being shown more often, children could find themselves in an echo chamber. This is when a user is mainly shown posts that support one point of view, while contrasting perspectives are hidden from their feed. This can reduce critical thinking, strengthen extreme views and increase the risk of falling for misinformation.

Algorithms use engagement signals such as likes, comments and shares to learn if content is valuable. Even comments that call out content as hateful, negative or incorrect tell the algorithm that people like it. Content that receives high engagement is then shown to more users.
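The idea that every interaction counts, whatever its tone, can be shown with a short sketch. This is a simplified illustration, not how any real platform ranks posts: the `Post` fields, the weights and the example numbers are all made up, and platforms do not publish their actual formulas.

```python
from dataclasses import dataclass


@dataclass
class Post:
    title: str
    likes: int
    comments: int  # counts every comment, supportive or critical
    shares: int


# Hypothetical weights chosen for illustration only; real platforms
# do not publish their ranking formulas.
WEIGHTS = {"likes": 1.0, "comments": 2.0, "shares": 3.0}


def engagement_score(post: Post) -> float:
    """Add up weighted interactions; the tone of a comment is ignored."""
    return (
        WEIGHTS["likes"] * post.likes
        + WEIGHTS["comments"] * post.comments
        + WEIGHTS["shares"] * post.shares
    )


def rank_feed(posts: list[Post]) -> list[Post]:
    """Order posts from highest to lowest engagement score."""
    return sorted(posts, key=engagement_score, reverse=True)


feed = rank_feed([
    Post("Helpful recipe video", likes=120, comments=10, shares=5),
    Post("Misleading claim", likes=20, comments=90, shares=15),
])
for post in feed:
    print(post.title, engagement_score(post))
```

In this made-up example the misleading post ranks first even though it has far fewer likes, because the many comments correcting it are counted as engagement.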

Because engagement on emotive posts tends to be higher, this can motivate users to make inflammatory posts. Creators might also post low-effort content, sometimes created using AI, or jump on dangerous trends or challenges in pursuit of views and revenue.

Low-quality AI content, sometimes labelled ‘AI slop’, has become widespread on social media. This is largely due to how quickly and easily creators can generate the content. The algorithm might also sometimes prioritise this content because of the many comments calling it out as AI-generated, which the algorithm sees as high engagement. Some platforms are working on ways to manage this content.

What is rage bait?

Rage bait has become increasingly common in recent years and was named the Oxford Word of the Year for 2025. It is content created specifically to anger people and cause outrage, provoking them to interact with a post and drive engagement.

This content is not always hateful and may focus on inoffensive topics, such as cooking a strange meal or acting intentionally annoying. However, it can also feature more serious issues, such as making controversial political statements. This can include spreading disinformation.

Rage bait vs. clickbait

Rage bait and clickbait both try to trick people into clicking on a post. However, rage bait is more effective for monetisation as users are more likely to leave comments criticising the original post. This then starts a snowball effect, as more comments mean more users will see the post, possibly commenting themselves, which can help increase revenue for the original poster.
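The snowball effect can be sketched as a simple feedback loop. This is a toy model under stated assumptions, not a measurement: the function `simulate_snowball`, the comment rates and the number of extra viewers each comment brings are all hypothetical values chosen for illustration.

```python
def simulate_snowball(initial_viewers: int, comments_per_100: int,
                      reach_per_comment: int, rounds: int) -> list[int]:
    """Return the viewer count after each round of a toy feedback loop."""
    viewers = initial_viewers
    history = [viewers]
    for _ in range(rounds):
        # Some viewers comment; each comment pushes the post to more people.
        comments = viewers * comments_per_100 // 100
        viewers += comments * reach_per_comment
        history.append(viewers)
    return history


# Hypothetical rates: the rage bait post attracts five times as many comments.
clickbait = simulate_snowball(1000, comments_per_100=1, reach_per_comment=20, rounds=3)
rage_bait = simulate_snowball(1000, comments_per_100=5, reach_per_comment=20, rounds=3)
print(clickbait)
print(rage_bait)
```

With these made-up numbers, both posts start with the same audience, but the post that provokes more comments reaches several times as many people after only three rounds.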

Impact of monetisation

When a creator posts content just to get clicks, views or shares, they might sometimes exaggerate claims or intentionally upset viewers. This can negatively impact wellbeing, especially for children. The following are some ways this type of content can affect children.

Harm to wellbeing

Repeated exposure to conflict and controversy can harm a child’s overall wellbeing. Comment sections of these posts are often filled with arguments and hateful language directed at the original poster or other commenters. This can desensitise children to conflict and disrespectful language, possibly encouraging them to repeat this behaviour.

Children might also comment on these posts themselves and receive hateful responses from other users. This can impact their enjoyment of online platforms and make them less likely to call out negative behaviour in future.

Engagement with these posts will also influence the algorithm to show children similar posts, which can make the topic difficult to escape.

Disinformation

A lot of rage bait spreads false or misleading information, encouraging people to react either by correcting it or because they are shocked by the content. Children who view these posts might take the information at face value and believe it is true. They might then share the information with others who call them out or share the information more widely. If posts spread false information about medicine or science, children are also at risk of harm to their health or physical wellbeing.

In some cases, disinformation can radicalise a child. Repeated exposure to content that spreads hate can lead to those ideas becoming part of their world view and values.

Content creation risks

Children might see monetisation as an opportunity to earn ‘easy’ money, especially if their family struggles with finances. To drive engagement, they might overshare, take part in dangerous challenges or post controversial content that causes outrage. All these engagement tactics expose children to risks.

Children might also experience stress and anxiety if their content doesn’t get as many views or likes as they had hoped for, or if they receive negative feedback.

What steps can parents take to keep their children safe?

If your child uses social media, video-sharing platforms or live streaming services, they will likely come across content that takes advantage of the algorithm for monetisation. However, there are steps you and your child can take to limit the negative impacts.

Start with conversation

Helping your child understand how monetisation works online is about building awareness, not fear. From an early age, simple conversations can help children recognise that some content is designed to keep people watching, clicking or reacting because it generates income.

As children get older, these discussions can expand to include how likes, views, ads and algorithms influence what appears in their feeds, and why some content aims to provoke strong emotions.

Supporting your child to ask questions about why content was created, how it makes them feel and who benefits from it can help them develop critical thinking skills to make more informed choices online.

Train the algorithm

Help your child ‘train’ their algorithm by avoiding engagement with controversial posts. Additionally, encourage your child to follow positive accounts that post uplifting content, rather than ones that attempt to create outrage and controversy. This can help them see less content that causes confusion, anger or upset.

You can also teach them to use the ‘Not interested’ feature on content that makes them uncomfortable. This will help prevent similar content appearing on their feed as often.

However, this is not a perfect system. Your child might still see trending rage bait and disinformation, and they might need to tell the algorithm they’re not interested several times.

Training the algorithm by platform

To mark a post as ‘Not interested’ on Facebook:

Step 1 – Click on the 3 horizontal dots in the top right corner of a post.

Step 2 – Select ‘Not interested’ from the drop-down menu.

Fewer posts like this will be shown in the feed in future.

To mark a post as ‘Not interested’ on Instagram:

Step 1 – Click on the 3 horizontal dots in the top right corner of a post.

Step 2 – Select ‘Not interested’ from the menu.

Step 3 – If you want, select the reason why you marked it as ‘Not interested’.

Fewer posts like this will be shown in the feed in future.

To mark a post as ‘Not interested’ on TikTok:

Step 1 – Hold down the screen on the video you are not interested in.

Step 2 – When the pop-up menu appears, select ‘Not interested’.

Fewer posts like this will be shown in the feed in future.

To mark a video as ‘Not interested’ on YouTube:

Step 1 – Click on the 3 vertical dots to the right of a video in your feed.

Step 2 – Select ‘Not interested’ or ‘Don’t recommend channel’ from the menu.

Step 3 – If you want, you can select the reason why you marked it as ‘Not interested’, with a choice of ‘I’ve already seen the video’ or ‘I don’t like the video’.

Fewer videos like this will be recommended in future.

Develop critical thinking skills

Teach your child to think critically about the content they see on the platforms they use. Before they engage with a post, they should first consider why the poster made it and whether it’s true. For example, a content creator might share false statements attributed to political figures they dislike in order to harm their reputation, or fake news stories that demonise a certain group.

Consider your child’s age and maturity

Before allowing your child to post their own content for monetisation, make sure they are mature enough to share safely online. For example, if your child is under 13, discourage them from trying to make money online, as they are unlikely to have the critical thinking skills needed to understand the risks of sharing content publicly.

As an alternative, consider how you as a parent can support their interests while keeping them safe, perhaps by making content together.

Your child should also only post on platforms if they meet the minimum age requirements. If they do meet the age requirements, it’s still important to monitor their activity and check in regularly.

For teens aged 16 or over, consider how you can still support their wellbeing, money management and critical thinking when they share content for monetisation. Keep conversations open and stay aware of what they’re sharing and who is interacting with their content.

Educate your child about safe sharing

If your child wants to begin creating content in the hope of earning money, you should ensure they know how to share safely. Talk to them about the risk of engaging in online challenges and tell them not to share any personal information such as their real name, address or school. Warn your child against posting rage bait and disinformation, and make sure you know how to support them if they receive negative comments.

Set them up for safety

Setting parental controls on your child’s social media and video-sharing platforms lets you filter harmful and extreme content from their feed and limit screen time to prevent excessive scrolling. This can help reduce exposure to disinformation and controversial posts.

Explore some useful platform guides below: