A year ago, Internet Matters published The New Face of Digital Abuse, a report exploring the proliferation of nude deepfakes (AI-generated sexually explicit images or videos) and children’s experiences of them.

At the time, our report highlighted several key issues surrounding nude deepfakes:

- ‘Nudifying’ tools are widely available online, appear in the results of mainstream search engines, and are cheap and easy to use.

- The vast majority of nude deepfakes feature women and girls.

- 13% of children had already encountered a nude deepfake, creating fear and anxiety amongst children.

Off the back of this research, we have been calling for Government to ban ‘nudifying’ apps and websites from the UK.

In the past year, the UK Government has taken some action to reduce the circulation of nude deepfakes. This includes bringing forth legislation to make the creation of non-consensual sexually explicit deepfakes a criminal offence and introducing new powers to allow AI models to be scrutinised to ensure safeguards are in place to prevent them generating or proliferating child sexual abuse material. However, this research shows why more action is needed.

In this blog we highlight how, a year on, new research finds a similar picture – ‘nudifying’ tools are still widely available and low-cost, and they overwhelmingly target female bodies. There is an urgent need for stronger safeguards.

Background

Understanding nude deepfakes

Deepfakes are fake images, videos or audio, generated using artificial intelligence (AI) tools, that look and feel like genuine content.

Nude deepfakes are a specific kind of deepfake, in which an image or video has been manipulated or generated to remove (or partially remove) clothing from someone by taking their features and applying them to another body. An estimated 98% of deepfake videos and images are non-consensual sexual imagery, and almost all pornographic deepfakes depict women.

AI is also being used to create child sexual abuse material (CSAM). Data from the Internet Watch Foundation (IWF) finds reports of AI-generated CSAM have more than doubled in the past year, rising from 199 in 2024 to 426 in 2025.

Deepfakes that feature sexual images of children are illegal in the UK and are classified as CSAM. Possession of any form of CSAM is a criminal offence.

Under the Online Safety Act (2023), the sharing of a non-consensual deepfake intimate image of an adult is a criminal offence. Further legislation, as outlined above, has also been promised by Government to curb the creation of non-consensual nude deepfakes.

However, the ‘nudifying’ tools to create these images or videos are still legal to use in the UK as of November 2025.

We carried out a series of controlled tests in November 2025. The searches were conducted on a desktop computer using a broadband connection.

We focused on the three major search engines we looked at in our 2024 report: Google, Bing and Yahoo. We entered three specific search phrases, “nudify AI”, “undress AI” and “declothing AI”, and looked primarily at the first page of search results (not counting ads or sponsored search results).

Parental controls were enabled at the broadband level to ensure a consistent baseline of filtering across all searches. However, individual search filters were set to blurred or moderate. This allowed us to observe whether potentially unsafe or inappropriate material could still appear in a partially filtered environment, which reflects a more typical user experience.

Findings

‘Nudifying’ tools and sites are still widely available

Across the three major search engines used in the research, 21 distinct ‘nudification’ sites appeared on the first page of search results, demonstrating how visible and accessible these tools remain.

When searching for “nudify AI”, none of the top results across Google, Bing or Yahoo linked directly to ‘nudifying’ tools or websites. Instead, the term produced articles discussing ‘nudification’ tools, indicating growing awareness of these platforms.

However, other search terms did return direct links to ‘nudification’ websites. The following table displays the number of ‘nudification’ websites returned on the first page of search results for each major search engine.

| Search term | Google (10 first page results) | Bing (10 first page results) | Yahoo (7 first page results) |
|---|---|---|---|
| “declothing AI” | 8 | 8 | 7 |
| “undress AI” | 9 | 2 | 2 |

‘Nudifying’ websites disproportionately depict female bodies

Of the 21 individual ‘nudification’ sites visited, around half (10) had images on their home page depicting women as sexualised and undressed.

We reported last year that many ‘nudifying’ tools do not work on images of boys and men. One ‘nudifying’ website confirmed this in its FAQs, which said that its model was currently “designed specifically for female photos”.

Some tools give users options to determine the body features of their AI-generated nude deepfake. Notably, many of the top sites we investigated gave an option to ‘choose’ breast size – highlighting again how these tools are created with female bodies in mind.

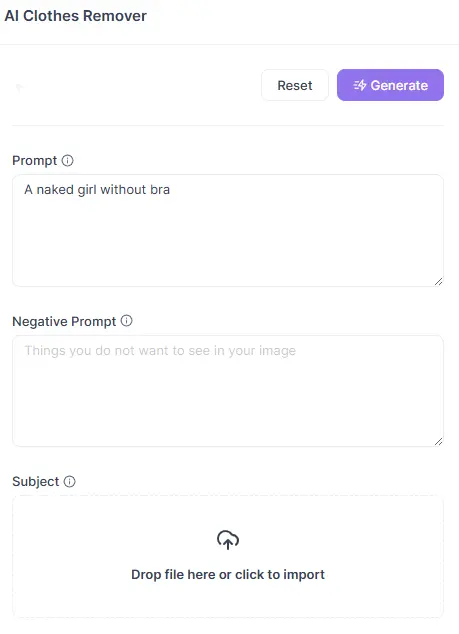

Some websites allow users to type prompts for their AI-generated image into a textbox. This was the case for 4 of the 21 ‘nudifying’ websites we looked at. On one of the websites, the default prompt was “A naked girl without a bra.”

The training of these models on female bodies, and their use to generate non-consensual sexual imagery of women and girls, is both a product of misogyny and a means of perpetuating gendered harm. While some sites include statements in their FAQs suggesting that consent is required when using another person’s image, we did not come across any mechanisms within the upload process to verify or enforce this. In practice, this creates only the illusion of consent rather than the genuine protection required under UK law.

The effects of this are visible in children’s experiences. Our previous research found that 38% of teenage girls, compared to 27% of teenage boys, strongly agreed that having a deepfake nude shared of themselves would be worse than a real nude. This points to the disproportionate psychological harm and fear of reputational damage faced by girls. This imbalance reflects broader patterns of gender-based violence, where women and girls are not only targeted more often but also suffer deeper social consequences (Ringrose and Regehr, 2023).

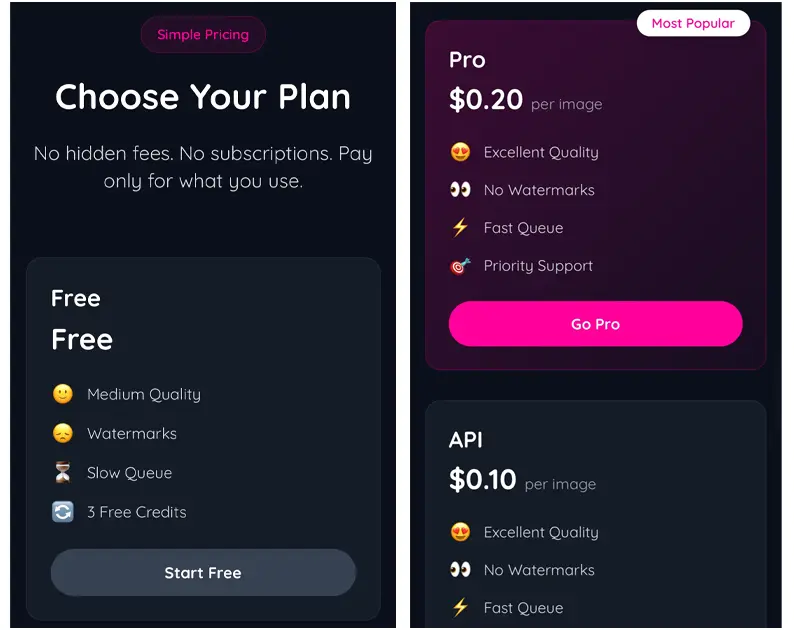

‘Nudifying’ tools are still cheap and easy to use

‘Nudifying’ sites also remain cheap, with many offering a certain number of free image generations or pricing plans that charge as little as 10 cents to generate one image. Their interfaces are also easy to navigate, with a simple click-and-upload button.

The Online Safety Act is failing to protect children from pornographic content

Under the Online Safety Act (2023), ‘nudifying’ apps and websites fall within scope of Part 5 of the Act. These rules require any service offering pornographic content to implement highly effective age assurance measures so that children are not normally able to gain access to the service.

In November 2025, Ofcom fined Itai Tech Ltd – a company which operates and hosts a ‘nudification’ site – £50,000 for failing to meet age assurance obligations to protect children from online pornography.

However, none of the 21 sites we looked at required users to verify their age before viewing the site.

This is worrying given the intent of many of these services is unmistakable. One site promotes itself as a “One-Click Strip Show (The Digital Kind),” while another encourages users to “Experience the power of AI and fully satisfy your sexual fantasies.” Despite this explicit positioning, children can still access these platforms with ease, exposing them to material that Ofcom has classified as pornography.

The law must go further

One year on from our original report, the reality is stark: the tools used to generate nude deepfakes remain readily available and in plain sight, perpetuating misogyny and violence against women and girls. This is perhaps even more concerning now than it was a year ago, given that measures under the Online Safety Act, which should be protecting children from accessing these sites, have since come into force.

However, this research shows that current legislation is not fit for purpose. That is why Government must ban ‘nudifying’ tools in the UK.